5.1.1 Analog Vs. Digital

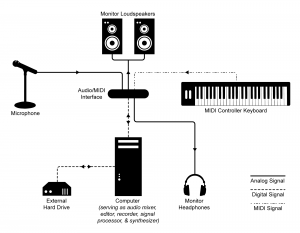

Today’s world of sound processing is quite different from what it was just a few decades ago. A large portion of sound processing is now done by digital devices and software – mixers, dynamics processors, equalizers, and a whole host of tools that previously existed only as analog hardware. This is not to say, however, that in all stages – from capturing to playing – sound is now treated digitally. As it is captured, processed, and played back, an audio signal can be transformed numerous times from analog-to-digital or digital-to-analog. A typical scenario for live sound reinforcement is pictured in Figure 5.1. In this setup, a microphone – an analog device – detects continuously changing air pressure, records this as analog voltage, and sends the information down a wire to a digital mixing console. Although the mixing console is a digital device, the first circuit within the console that the audio signal encounters is an analog preamplifier. The preamplifier amplifies the voltage of the audio signal from the microphone before passing it on to an analog-to-digital converter (ADC). The ADC performs a process called digitization and then passes the signal into one of many digital audio streams in the mixing console. The mixing console applies signal-specific processing such as equalization and reverberation, and then it mixes and routes the signal together with other incoming signals to an output connector. Usually this output is analog, so the signal passes through a digital-to-analog converter (DAC) before being sent out. That signal might then be sent to a digital system processor responsible for applying frequency, delay, and dynamics processing for the entire sound system and distributing that signal to several outputs. The signal is similarly converted to digital on the way into this processor via an ADC, and then back through a DAC to analog on the way out. 
The analog signals are then sent to analog power amplifiers before they are sent to a loudspeaker, which converts the audio signal back into a sound wave in the air.

A sound system like the one pictured can be a mix of analog and digital devices, and it is not always safe to assume a particular piece of gear can, will, or should be one type or the other. Even some power amplifiers nowadays have a digital signal stage that may require conversion from an analog input signal. Of course, with the prevalence of digital equipment and computerized systems, it is likely that an audio signal will exist digitally at some point in its lifetime. In systems using multiple digital devices, there are also ways of interfacing two pieces of equipment using digital signal connections that can maintain the audio in its digital form and eliminate unnecessary analog-to-digital and digital-to-analog conversions. Specific types of digital signal connections and related issues in connecting digital devices are discussed later in this chapter.

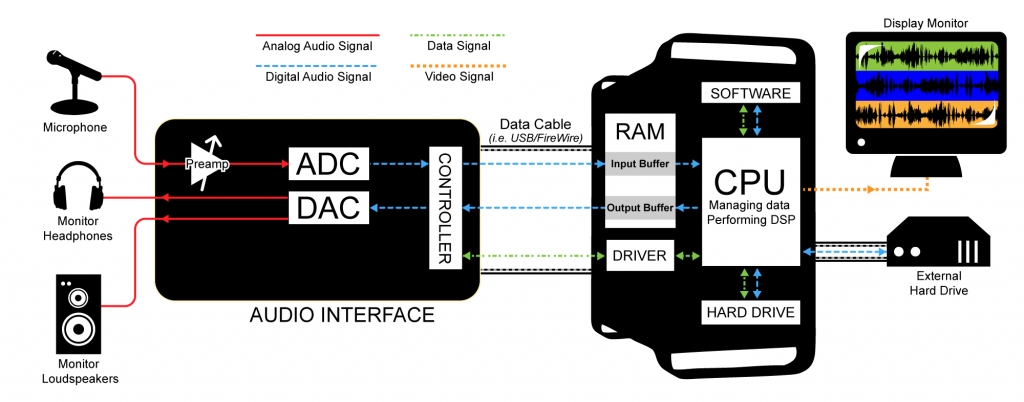

The previous figure shows a live sound system setup. A typical setup of a computer-based audio recording and editing system, commonly called a digital audio workstation (DAW), is pictured in Figure 5.2. While this workstation is essentially digital, the DAW, like the live sound system, includes analog components such as microphones and loudspeakers.

Understanding the digitization process paves the way for understanding the many ways that digitized sound can be manipulated. Let’s look at this more closely.

5.1.2 Digitization

5.1.2.1 Two Steps: Sampling and Quantization

In the realm of sound, the digitization process takes an analog occurrence of sound, records it as a sequence of discrete events, and encodes it in the binary language of computers. Digitization involves two main steps, sampling and quantization.

Sampling is a matter of measuring air pressure amplitude at equally-spaced moments in time, where each measurement constitutes a sample. The number of samples taken per second (samples/s) is the sampling rate. Units of samples/s are also referred to as Hertz (Hz). (Recall that Hertz is also used to mean cycles/s with regard to a frequency component of sound. Hertz is an overloaded term, having different meanings depending on where it is used, but the context makes the meaning clear.)

[aside]It’s possible to use real numbers instead of integers to represent sample values in the computer, but that doesn’t get rid of the basic problem of quantization. Although a wide range of sample values can be represented with real numbers, there is still only a finite number of them, so rounding is still necessary with real numbers.[/aside]

Quantization is a matter of representing the amplitude of individual samples as integers expressed in binary. The fact that integers are used forces the samples to be measured in a finite number of discrete levels. The range of the integers possible is determined by the bit depth, the number of bits used per sample. A sample’s amplitude must be rounded to the nearest of the allowable discrete levels, which introduces error in the digitization process.

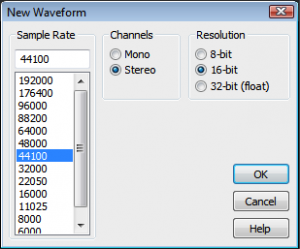

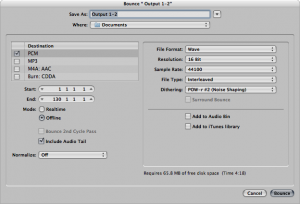

When sound is recorded in digital format, a sampling rate and a bit depth are specified. Often there are default audio settings in your computing environment, or you may be prompted for initial settings, as shown in Figure 5.3. The number of channels must also be specified – mono for one channel and stereo for two. (More channels are possible in the final production, e.g., 5.1 surround.)

A common default setting is designated CD quality audio, with a sampling rate of 44,100 Hz, a bit depth of 16 bits (i.e., two bytes) per channel, with two channels. Sampling rate and bit depth have an impact on the quality of a recording. To understand how this works, let’s look at sampling and quantization more closely.
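As a rough worked example, the amount of raw (uncompressed) data produced by a recording follows directly from the three settings just described. The function name below is our own, chosen for illustration, and the sketch assumes no compression or file-header overhead.

```python
def bytes_per_second(sampling_rate_hz, bit_depth, channels):
    """Raw (uncompressed) audio data rate in bytes per second."""
    return sampling_rate_hz * bit_depth * channels // 8

# CD quality: 44,100 Hz, 16 bits per sample, two channels
cd_rate = bytes_per_second(44100, 16, 2)
print(cd_rate)        # 176400 bytes per second
print(cd_rate * 60)   # 10584000 bytes per minute
```

Doubling any one of the three settings doubles the amount of data that must be stored or transmitted.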

5.1.2.2 Sampling and Aliasing

[wpfilebase tag=file id=116 tpl=supplement /]

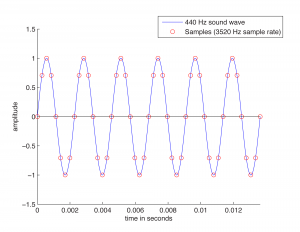

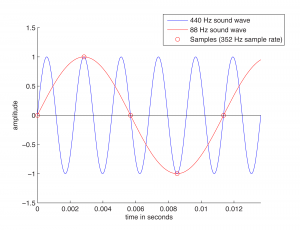

Recall from Chapter 2 that the continuously changing air pressure of a single-frequency sound can be represented by a sine function, as shown in Figure 5.4. One cycle of the sine wave represents one cycle of compression and rarefaction of the sound wave. In digitization, a microphone detects changes in air pressure, sends corresponding voltage changes down a wire to an ADC, and the ADC regularly samples the values. The physical process of measuring the changing air pressure amplitude over time can be modeled by the mathematical process of evaluating a sine function at particular points across the horizontal axis.

Figure 5.4 shows eight samples being taken for each cycle of the sound wave. The samples are represented as circles along the waveform. The sound wave has a frequency of 440 cycles/s (440 Hz), and the sampling rate has a frequency of 3520 samples/s (3520 Hz). The samples are stored as binary numbers. From these stored values, the amplitude of the digitized sound can be recreated and turned into analog voltage changes by the DAC.
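The idea of modeling sampling as evaluating a sine function at equally spaced points can be sketched in a few lines. This is a minimal illustration under the assumptions of the example above (a 440 Hz wave and a 3520 Hz sampling rate); the function name `sample_sine` is hypothetical.

```python
import math

def sample_sine(freq_hz, sampling_rate_hz, num_samples, amplitude=1.0):
    """Evaluate a sine wave at the equally spaced times n / sampling_rate_hz."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * n / sampling_rate_hz)
            for n in range(num_samples)]

# One cycle of a 440 Hz wave sampled at 3520 Hz yields 3520 / 440 = 8 samples.
samples = sample_sine(440, 3520, 8)
print([round(s, 3) for s in samples])
```

The eight values trace one full cycle: they rise from zero to the positive peak, return through zero, reach the negative peak, and head back toward zero.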

The quantity of these stored values that exists within a given amount of time, as defined by the sampling rate, is important to capturing and recreating the frequency content of the audio signal. The higher the frequency content of the audio signal, the more samples per second (higher sampling rate) are needed to accurately represent it in the digital domain. Consider what would happen if only one sample was taken for every one-and-a-quarter cycles of the sound wave, as pictured in Figure 5.5. This would not be enough information for the DAC to correctly reconstruct the sound wave. Some cycles have been “jumped over” by the sampling process. In the figure, the higher-frequency wave is the original analog 440 Hz wave.

When the sampling rate is too low, the reconstructed sound wave appears to be lower-frequency than the original sound (or have an incorrect frequency component, in the case of a complex sound wave). This is a phenomenon called aliasing – the incorrect digitization of a sound frequency component resulting from an insufficient sampling rate.
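The scenario of one sample per one-and-a-quarter cycles can be checked numerically. In the sketch below, the sampling rate works out to 440 / 1.25 = 352 samples/s, and the samples turn out to be indistinguishable from those of an 88 Hz wave. The helper `alias_frequency` uses a commonly cited folding relation (distance to the nearest multiple of the sampling rate); it is our own illustration and not necessarily the algorithm presented in Section 5.3.1.

```python
import math

def alias_frequency(freq_hz, sampling_rate_hz):
    """Apparent frequency after sampling: the distance from freq_hz to the
    nearest integer multiple of the sampling rate."""
    nearest_multiple = round(freq_hz / sampling_rate_hz) * sampling_rate_hz
    return abs(freq_hz - nearest_multiple)

f = 440.0
fs = f / 1.25                      # one sample per 1.25 cycles: 352 samples/s
f_alias = alias_frequency(f, fs)   # 88 Hz

# Compare the two waves at every sample instant.
original = [math.sin(2 * math.pi * f * n / fs) for n in range(20)]
aliased = [math.sin(2 * math.pi * f_alias * n / fs) for n in range(20)]
max_difference = max(abs(a - b) for a, b in zip(original, aliased))
print(fs, f_alias, max_difference)
```

Because the two sets of samples agree to within floating-point error, a DAC given only these samples has no way to tell that the original wave was 440 Hz rather than 88 Hz.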

For a single-frequency sound wave to be correctly digitized, the sampling rate must be at least twice the frequency of the sound wave. More generally, for a sound with multiple frequency components, the sampling rate must be at least twice the frequency of the highest frequency component. This is known as the Nyquist theorem.

[equation]

The Nyquist Theorem

Given a sound with maximum frequency component of f Hz, a sampling rate of at least 2f is required to avoid aliasing. The minimum acceptable sampling rate (2f in this context) is called the Nyquist rate.

Given a sampling rate of f, the highest-frequency sound component that can be correctly sampled is f/2. The highest frequency component that can be correctly sampled is called the Nyquist frequency.

[/equation]

In practice, aliasing is generally not a problem. Standard sampling rates in digital audio recording environments are high enough to capture all frequencies in the human-audible range. The highest audible frequency is about 20,000 Hz. In fact, most people don’t hear frequencies this high, as our ability to hear high frequencies diminishes with age. CD quality sampling rate is 44,100 Hz (44.1 kHz), which is acceptable as it is more than twice the highest audible component. In other words, with CD quality audio, the highest frequency we care about capturing (20 kHz for audibility purposes) is less than the Nyquist frequency for that sampling rate, so this is fine. A sampling rate of 48 kHz is also widely supported, and sampling rates go up as high as 192 kHz.
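The comparison in the preceding paragraph is simple arithmetic, sketched here for the sampling rates mentioned. The function names and the 20 kHz constant are taken from the discussion above and are used only for illustration.

```python
def nyquist_rate(max_freq_hz):
    """Minimum sampling rate for a sound whose highest component is max_freq_hz."""
    return 2 * max_freq_hz

def nyquist_frequency(sampling_rate_hz):
    """Highest frequency component that a given sampling rate can capture."""
    return sampling_rate_hz / 2

HIGHEST_AUDIBLE_HZ = 20000

for rate in (44100, 48000, 192000):
    covers_audible_range = nyquist_frequency(rate) >= HIGHEST_AUDIBLE_HZ
    print(rate, nyquist_frequency(rate), covers_audible_range)
```

All three rates have a Nyquist frequency above 20 kHz, which is why aliasing of audible content is rarely a concern at standard settings.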

Even if a sound contains frequency components that are above the Nyquist frequency, to avoid aliasing the ADC generally filters them out before digitization.

Section 5.3.1 gives more detail about the mathematics of aliasing and an algorithm for determining the frequency of the aliased wave in cases where aliasing occurs.

5.1.2.3 Bit Depth and Quantization Error

When samples are taken, the amplitude at each sampled moment in time must be converted to an integer in binary representation. The number of bits used for each sample, called the bit depth, determines the precision with which you can represent the sample amplitudes. For this discussion, we assume that you know the basics of binary representation, but let’s review briefly.

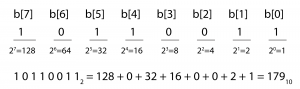

Binary representation, the fundamental language of computers, is a base 2 number system. Each bit in a binary number holds either a 1 or a 0. Eight bits together constitute one byte. The bit positions in a binary number are numbered from right to left starting at 0, as shown in Figure 5.6. The rightmost bit is called the least significant bit, and the leftmost is called the most significant bit. The ith bit is called b[i] .

The value of an n-bit binary number is equal to

[equation caption=”Equation 5.1″]

$$!\sum_{i=0}^{n-1}b\left [ i \right ]\ast 2^{i}$$

[/equation]

Notice that doing the summation from $$i=0$$ up to $$n-1$$ causes the terms in the sum to be in the reverse order from that shown in Figure 5.6. The summation for our example is

$$!10110011_{2}=1\ast 1+1\ast 2+0\ast 4+0\ast 8+1\ast 16+1\ast 32+0\ast 64+1\ast 128=179_{10}$$

Thus, 10110011 in base 2 is equal to 179 in base 10. (Base 10 is also called decimal.) We leave off the subscript 2 in binary numbers and the subscript 10 in decimal numbers when the base is clear from the context.

From the definition of binary numbers, it can be seen that the largest decimal number that can be represented with an n-bit binary number is $$2^{n}-1$$, and the number of different values that can be represented is $$2^{n}$$. For example, the decimal values that can be represented with an 8-bit binary number range from 0 to 255, so there are 256 different values.
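The summation in Equation 5.1 translates directly into code. The sketch below takes the bits as a string written in the usual left-to-right order, so bit b[i] is found by counting from the right; the function name `binary_value` is our own.

```python
def binary_value(bits):
    """Value of a binary string per Equation 5.1: the sum of b[i] * 2**i,
    where b[0] is the rightmost (least significant) bit."""
    n = len(bits)
    return sum(int(bits[n - 1 - i]) * 2 ** i for i in range(n))

print(binary_value("10110011"))   # 179
print(2 ** 8 - 1, 2 ** 8)         # 255 256: largest 8-bit value, number of values
```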

These observations have significance with regard to the bit depth of a digital audio recording. A bit depth of 8 allows 256 different discrete levels at which samples can be recorded. A bit depth of 16 allows $$2^{16}$$ = 65,536 discrete levels, which in turn provides much higher precision than a bit depth of 8.

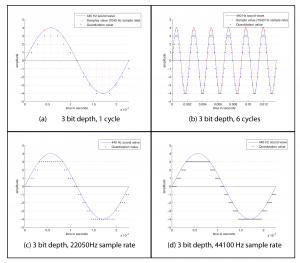

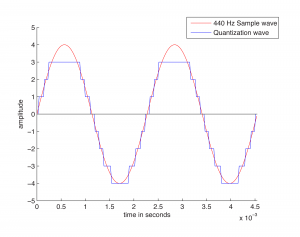

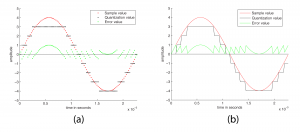

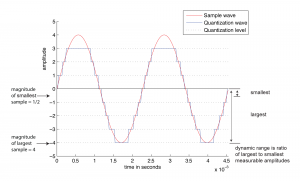

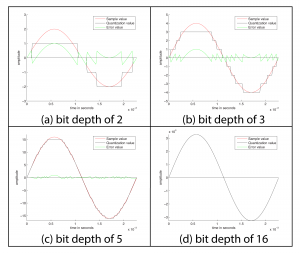

The process of quantization is illustrated in Figure 5.7. Again, we model a single-frequency sound wave as a sine function, centering the sine wave on the horizontal axis. We use a bit depth of 3 to simplify the example, although this is far lower than any bit depth that would be used in practice. With a bit depth of 3, $$2^{3}$$ = 8 quantization levels are possible. By convention, half of the quantization levels are below the horizontal axis (that is, $$2^{n-1}$$ of the quantization levels). One level is the horizontal axis itself (level 0), and $$2^{n-1}-1$$ levels are above the horizontal axis. These levels are labeled in the figure, ranging from -4 to 3. When a sound is sampled, each sample must be scaled to one of $$2^{n}$$ discrete levels. However, the samples in reality might not fall neatly onto these levels. They have to be rounded up or down by some consistent convention. We round to the nearest integer, with the exception that values at 3.5 and above are rounded down to 3. The original sample values are represented by red dots on the graphs. The quantized values are represented as black dots. The difference between the original samples and the quantized samples constitutes rounding error. The lower the bit depth, the more values potentially must be rounded, resulting in greater quantization error. Figure 5.8 shows a simple view of the original wave vs. the quantized wave.

[wpfilebase tag=file id=117 tpl=supplement /]

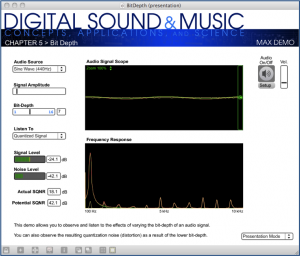

Quantization error is sometimes referred to as noise. Noise can be broadly defined as part of an audio signal that isn’t supposed to be there. However, some sources would argue that a better term for quantization error is distortion, defining distortion as an unwanted part of an audio signal that is related to the true signal. If you subtract the stair-step wave from the true sine wave in Figure 5.8, you get the green part of the graphs in Figure 5.9. This is precisely the error – i.e., the distortion – resulting from quantization. Notice that the error follows a regular pattern that changes in tandem with the original “correct” sound wave. This makes the distortion sound more noticeable in human perception, as opposed to completely random noise. The error wave constitutes sound itself. If you take the sample values that create the error wave graph in Figure 5.9, you can actually play them as sound. You can compare and listen to the effects of various bit depths and the resulting quantization error in the Max Demo “Bit Depth” linked to this section.
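The error wave can be computed by subtracting the quantized samples from the original ones. The sketch below does this for one cycle of a full-scale sine wave at a bit depth of 3. The choice of 64 samples per cycle and the tie-rounding rule inside `quantize` are assumptions made only for this illustration.

```python
import math

def quantize(value, bit_depth):
    """Round to the nearest level and clamp to -2**(n-1) .. 2**(n-1) - 1."""
    lowest = -(2 ** (bit_depth - 1))
    highest = 2 ** (bit_depth - 1) - 1
    return max(lowest, min(highest, math.floor(value + 0.5)))

bit_depth = 3
amplitude = 2 ** (bit_depth - 1)   # full scale for the 3-bit example
samples_per_cycle = 64             # assumed, chosen only for illustration

original = [amplitude * math.sin(2 * math.pi * n / samples_per_cycle)
            for n in range(samples_per_cycle)]
quantized = [quantize(v, bit_depth) for v in original]
error = [v - q for v, q in zip(original, quantized)]   # the error wave

print(max(abs(e) for e in error))
```

Wherever the wave is not clamped, the error stays within half a quantization level. The largest error occurs at the positive peak, where the sample is forced down to the highest available level. Because `error` is itself a list of sample values, it could be written out and played as sound.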

For those who prefer to distinguish between noise and distortion, noise is defined as an unwanted part of an audible signal arising from environmental interference – like background noise in a room where a recording is being made, or noise from the transmission of an audio signal along wires. This type of noise is more random than the distortion caused by quantization error. Section 5.3 shows you how you can experiment with sampling and quantization in MATLAB, C++, and Java programming to understand the concepts in more detail.

5.1.2.4 Dynamic Range

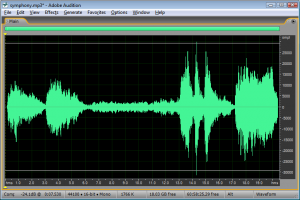

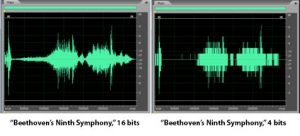

Another way to view the implications of bit depth and quantization error is in terms of dynamic range. The term dynamic range has two main usages with regard to sound. First, an occurrence of sound that takes place over time has a dynamic range, defined as the range between the highest and lowest amplitude moments of the sound. This is best illustrated by music. Classical symphonic music generally has a wide dynamic range. For example, “Beethoven’s Fifth Symphony” begins with a high amplitude “Bump bump bump baaaaaaaa” and continues with a low-amplitude string section. The difference between the loud and quiet parts is intentionally dramatic and is what gives the piece a wide dynamic range. You can see this in the short clip of the symphony graphed in Figure 5.10. The dynamic range of this clip is a function of the ratio between the largest sample value and the magnitude of the smallest. Notice that you don’t measure the range across the horizontal axis but from the highest-magnitude sample either above or below the axis to the lowest-magnitude sample on the same side of the axis. A sound clip with a narrow dynamic range has a much smaller difference between the loud and quiet parts. In this usage of the term dynamic range, we’re focusing on the dynamic range of a particular occurrence of sound or music.

[aside]

MATLAB Code for Figure 5.11 and Figure 5.12:

hold on;

f = 440; T = 1/f; % frequency in Hz and period in seconds

Bdepth = 3; % bit depth (3 for Figure 5.11, 4 for Figure 5.12)

Drange = 2^(Bdepth-1); % dynamic range

lims = [0 2*T -(Drange+1) Drange+1]; % axis limits; not named "axis" so the built-in function stays usable

SRate = 44100; % sample rate

sample_x = (0:2*T*SRate)./SRate; % sample times covering two cycles

sample_y = Drange*sin(2*pi*f*sample_x);

plot(sample_x,sample_y,'r-');

q_y = round(sample_y); % quantization value

for i = 1:length(q_y)

if q_y(i) == Drange % the top level does not exist, so clamp to Drange-1

q_y(i) = Drange-1;

end

end

plot(sample_x,q_y,'-')

for i = -Drange:Drange-1 % quantization level

plot(lims(1:2),[i i],'k:')

end

legend('Sample wave','Quantization wave','Quantization level')

plot(lims(1:2),[0 0],'k-')

axis(lims);

ylabel('amplitude');xlabel('time in seconds');

hold off;

[/aside]

In another usage of the term, the potential dynamic range of a digital recording refers to the possible range of high and low amplitude samples as a function of the bit depth. Choosing the bit depth for a digital recording automatically constrains the dynamic range, a higher bit depth allowing for a wider dynamic range.

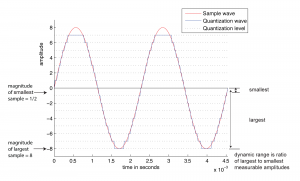

Consider how this works. A digital recording environment has a maximum amplitude level that it can record. On the scale of n-bit samples, the maximum amplitude (in magnitude) would be $$2^{n-1}$$. The question is this: How far, relatively, can the audio signal fall below the maximum level before it is rounded down to silence? The figures below show the dynamic range at a bit depth of 3 (Figure 5.11) compared to the dynamic range at a bit depth of 4 (Figure 5.12). Again, these are non-practical bit depths chosen for simplicity of illustration. The higher bit depth gives a wider range of sound amplitudes that can be recorded. The smaller bit depth loses more of the quiet sounds when they are rounded down to zero or overpowered by the quantization noise. You can see this in the right hand view of Figure 5.13, where a portion of “Beethoven’s Ninth Symphony” has been reduced to four bits per sample.

[wpfilebase tag=file id=40 tpl=supplement /]

The term dynamic range has a precise mathematical definition that relates back to quantization error. In the context of music, we encountered dynamic range as a ratio between the loudest and quietest musical passages of a song or performance. Digitally, the loudest potential passage of the music (or other audio signal) that could be represented would have full amplitude (all bits on). The quietest possible passage is the quantization noise itself. Any sound quieter than that would simply be masked by the noise or rounded down to silence. The ratio between the loudest and quietest parts is therefore the highest (possible) amplitude of the audio signal compared to the amplitude of the quantization noise, or the ratio of the signal level to the noise level. This is what is known as the signal-to-quantization-noise ratio (SQNR), and in this context, dynamic range is the same thing. This definition is given in Equation 5.2.

[equation caption=”Equation 5.2″]

Given a bit depth of n, the dynamic range of a digital audio recording is equal to

$$!20\log_{10}\left ( \frac{2^{n-1}}{1/2} \right )dB$$

[/equation]

You can see from the equation that dynamic range as SQNR is measured in decibels. Decibels are a dimensionless unit derived from the logarithm of the ratio between two values. For sound, decibels are based on the ratio between the air pressure amplitude of a given sound and the air pressure amplitude of the threshold of hearing. For dynamic range, decibels are derived from the ratio between the maximum and minimum amplitudes of an analog waveform quantized with n bits. The maximum magnitude amplitude is $$2^{n-1}$$. The minimum amplitude of an analog wave that would be converted to a non-zero value when it is quantized is ½. Signal-to-quantization-noise is based on the ratio between these maximum and minimum values for a given bit depth. It turns out that this is exactly the same value as the dynamic range.

Equation 5.2 can be simplified as shown in Equation 5.3.

[equation caption=”Equation 5.3″]

$$!20\log_{10}\left ( \frac{2^{n-1}}{1/2} \right )=20\log_{10}\left ( 2^{n} \right )=20n\log_{10}\left ( 2 \right )\approx 6.02n$$

[/equation]

[wpfilebase tag=file id=118 tpl=supplement /]

Equation 5.3 gives us a method for determining the possible dynamic range of a digital recording as a function of the bit depth. For bit depth n, the possible dynamic range is approximately 6n dB. A bit depth of 8 gives a dynamic range of approximately 48 dB, a bit depth of 16 gives a dynamic range of about 96 dB, and so forth.
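Equation 5.2 can be evaluated directly to see how close the 6n rule of thumb is. The function name below is our own; the formula is the one given in the equation.

```python
import math

def dynamic_range_db(bit_depth):
    """Potential dynamic range per Equation 5.2: 20 * log10(2**(n-1) / (1/2))."""
    return 20 * math.log10(2 ** (bit_depth - 1) / 0.5)

for n in (8, 16, 24):
    print(n, round(dynamic_range_db(n), 2))
# 8 48.16
# 16 96.33
# 24 144.49
```

Each additional bit adds roughly 6 dB, so moving from 16 to 24 bits widens the potential dynamic range by about 48 dB.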

When we introduced this section, we added the adjective “potential” to “dynamic range” to emphasize that it is the maximum possible range that can be used as a function of the bit depth. But not all of this dynamic range is used if the amplitude of a digital recording is relatively low, never reaching its maximum. Thus, it is important to consider the actual dynamic range (or actual SQNR) as opposed to the potential dynamic range (or potential SQNR, just defined). Take, for example, the situation illustrated in Figure 5.15. A bit depth of 7 has been chosen. The amplitude of the wave is 24 dB below the maximum possible. Because the sound uses so little of its potential dynamic range, the actual dynamic range is small. We’ve used just a simple sine wave in this example so that you can easily see the error wave in proportion to the sine wave, but you can imagine a music recording that has a low actual dynamic range because the recording was done at a low level of amplitude. The difference between potential dynamic range and actual dynamic range is illustrated in detail in the interactive Max demo associated with this section. This demo shows that, in addition to choosing a bit depth appropriately to provide sufficient dynamic range for a sound recording, it’s important that you use the available dynamic range optimally. This entails setting microphone input voltage levels so that the loudest sound produced is as close as possible to the maximum recordable level. These practical considerations are discussed further in Section 5.2.2.

We have one more related usage of decibels to define in this section. In the interface of many software digital audio recording environments, you’ll find that decibels-full-scale (dBFS) is used. (In fact, it is used in the Signal and Noise Level meters in Figure 5.15.) As you can see in Figure 5.16, which shows amplitude in dBFS, the maximum amplitude is 0 dBFS, at equidistant positions above and below the horizontal axis. As you move toward the horizontal axis (from either above or below) through decreasing amplitudes, the dBFS values become increasingly negative.

The equation for converting a sample value to dBFS is given in Equation 5.4.

[equation caption=”Equation 5.4″]

For n-bit samples, decibels-full-scale (dBFS) is defined as follows:

$$!dBFS=20\log_{10}\left ( \frac{x}{2^{n-1}} \right )$$

where $$x$$ is an integer sample value between 0 and $$2^{n-1}$$.

[/equation]
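As a quick check of Equation 5.4, the sketch below converts a few sample values to dBFS. Two choices are ours rather than part of the definition: the magnitude of the sample is used so that negative samples can be passed in, and a sample value of 0 (for which the logarithm is undefined) is reported as negative infinity.

```python
import math

def dbfs(sample_value, bit_depth):
    """Decibels-full-scale per Equation 5.4 for an n-bit sample value."""
    full_scale = 2 ** (bit_depth - 1)
    magnitude = abs(sample_value)
    if magnitude == 0:
        return float("-inf")   # assumption: silence is reported as -infinity
    return 20 * math.log10(magnitude / full_scale)

print(dbfs(32768, 16))             # 0.0 (full scale)
print(round(dbfs(16384, 16), 2))   # -6.02 (half of full scale)
```

Halving the amplitude lowers the level by about 6 dB, which is why dBFS values become increasingly negative toward the horizontal axis.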

Generally, computer-based sample editors allow you to select how you want the vertical axis labeled, with choices including sample values, percentage, values normalized between -1 and 1, and dBFS.

Chapter 7 goes into more depth about dynamics processing, the adjustment of dynamic range of an already-digitized sound clip.

5.1.2.5 Audio Dithering and Noise Shaping

It’s possible to take an already-recorded audio file and reduce its bit depth. In fact, this is commonly done. Many audio engineers keep their audio files at 24 bits while they’re working on them, and reduce the bit depth to 16 bits when they’re finished processing the files or ready to burn to an audio CD. The advantage of this method is that when the bit depth is higher, less error is introduced by processing steps like normalization or adjustment of dynamics. Because of this advantage, even if you choose a bit depth of 16 from the start, your audio processing system may be using 24 bits (or an even higher bit depth) behind the scenes anyway during processing, as is the case with Pro Tools.

Audio dithering is a method to reduce the quantization error introduced by a low bit depth. Audio dithering can be used by an ADC when quantization is initially done, or it can be used on an already-quantized audio file when bit depth is being reduced. Oddly enough, dithering works by adding a small amount of random noise to each sample. You can understand the advantage of doing this if you consider a situation where a number of consecutive samples would all round down to 0 (i.e., silence), causing breaks in the sound. If a small random amount is added to each of these samples, some round up instead of down, smoothing over those breaks. In general, dithering reduces the perceptibility of the distortion because it causes the distortion to no longer follow exactly in tandem with the pattern of the true signal. In this situation, low-amplitude noise is a good trade-off for distortion.
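The effect described above, where a run of samples that would all round to zero is broken up by added noise, can be sketched as follows. This is a simplified illustration, not the method used by any particular ADC or audio program: it assumes samples are expressed in units of one quantization step and uses uniformly distributed noise of up to half a step, whereas real dithering algorithms may use other noise distributions.

```python
import math
import random

def requantize(samples, dither=False, rng=None):
    """Round samples (in units of one quantization step) to integers.
    With dither=True, uniform random noise of up to half a step is added first."""
    rng = rng or random.Random(0)   # fixed seed so the sketch is repeatable
    result = []
    for s in samples:
        noise = rng.uniform(-0.5, 0.5) if dither else 0.0
        result.append(math.floor(s + noise + 0.5))
    return result

quiet = [0.3] * 10000                       # a steady level below half a step
plain = requantize(quiet)                   # every sample rounds down to 0
dithered = requantize(quiet, dither=True)   # a mix of 0s and 1s
print(sum(plain) / len(plain), sum(dithered) / len(dithered))
```

Without dither the quiet signal disappears entirely. With dither, the average of the output stays close to the original level of 0.3, at the cost of added low-level noise.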

Noise shaping is a method that can be used in conjunction with audio dithering to further compensate for bit-depth reduction. It works by raising the frequency range of the rounding error after dithering, putting it into a range where human hearing is less sensitive. When you reduce the bit depth of an audio file in an audio processing environment, you are often given an option of applying dithering and noise shaping, as shown in Figure 5.17. Dithering can be done without noise shaping, but noise shaping is applied only after dithering. Also note that dithering and noise shaping cannot be done apart from the requantization step because they are embedded into the way the requantization is done. A popular algorithm for dithering and noise shaping is the proprietary POW-r (Psychoacoustically Optimized Wordlength Reduction), which is built into Pro Tools, Logic Pro, Sonar, and Ableton Live.

Dithering and noise shaping are discussed in more detail in Section 5.3.7.

5.1.3 Audio Data Streams and Transmission Protocols

Audio data passed from one device to another is referred to as a stream. There are several different formats for transmitting a digital audio stream between devices. In some cases, you might want to interconnect two pieces of equipment digitally, but they don’t offer a compatible transmission protocol. The best thing to do is make sure you purchase equipment that is compatible with the equipment you already have. In order to succeed at this, you’ll need to understand and be able to identify the various options for digital audio transmission.

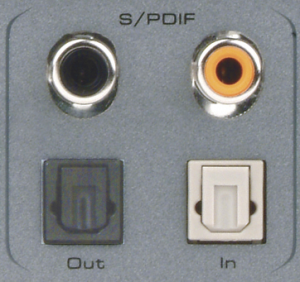

The most common transmission protocol, particularly in consumer-grade equipment, is the Sony/Philips Digital Interconnect Format (S/PDIF). With S/PDIF you can transmit two channels on a single wire. Typically this means you can transmit both the left and right channels of a stereo pair using a single cable instead of the two cables required for analog transmission. S/PDIF can be transmitted electrically or optically. Electrical transmission involves RCA (Radio Corporation of America) connectors and a low loss, high bandwidth coaxial cable. This cable is different from the cable you would use for analog transmission. For S/PDIF you need a cable like what is used for video. S/PDIF transmits the digital data electrically in a stream of square wave pulses. Using cheap, low bandwidth cable can result in a loss of high frequency content that can ultimately lose the square wave form, resulting in data loss. When looking for a cable for electrical S/PDIF transmission, look for something with RCA connectors on each end and an impedance of 75 Ω.

S/PDIF can also be transmitted optically using an optical cable with TOSLINK (TOShiba-LINK) connectors. Optical transmission has the advantage of being able to run longer distances without the risk of signal loss, and it is not susceptible to electromagnetic interference like an electrical signal. Optical cables are more easily broken, so if you plan to move your equipment around, you should invest in an optical cable that has good insulation.

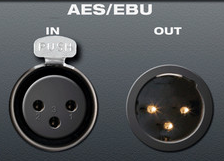

S/PDIF is considered a consumer grade transmission protocol. S/PDIF has a professional grade cousin called AES3, more commonly known as AES/EBU (Audio Engineering Society/European Broadcasting Union). The actual format of the digital stream is almost identical. The main differences are the type of cable and connectors used and the maximum distance you can reliably transmit the signal. AES/EBU can be run electrically as a balanced signal using three pin XLR connectors with a 110 Ω twisted pair cable or unbalanced using BNC connectors with a 75 Ω coaxial cable. The unbalanced version has a maximum transmission distance of 1000 meters as opposed to the 100 meters maximum for the balanced version. The balanced signal is by far the most common implementation as shown in Figure 5.19.

If you need to transmit more than two channels of digital audio between devices, there are several options available. The most common is the ADAT Optical Interface (Alesis Digital Audio Tape). Alesis developed the system to allow signal transfer between their eight-track digital audio tape recorders, but the system has since been widely adopted for multi-channel digital signal transmission between devices at short distances. ADAT can transmit eight channels of audio at sampling rates up to 48 kHz or four channels at sampling rates up to 96 kHz. ADAT uses the same optical TOSLINK cable used for S/PDIF. This makes it relatively inexpensive for the consumer. However, the protocol must be licensed from Alesis if a manufacturer wants to implement it in their equipment.

There are several other emerging standards for multi-channel digital audio transmission, more than we can cover in the scope of this chapter. What is important to know is that most protocols allow digital transmission of 64 channels or more of digital audio over long distances using fiber optic or CAT-5e cable. Examples of this kind of transmission include MADI, AVB, CobraNet, A-Net, and mLAN. If you need this level of functionality, you will likely be able to purchase interface cards that use these protocols for most computers and digital mixing consoles.

5.1.4 Signal Path in an Audio Recording System

In Section 5.1.1, we illustrated a typical setup for a computer-based digital audio recording and editing system. Let’s look at this more closely now, with special attention to the signal path and conversions of the audio stream between analog and digital forms.

Figure 5.20 illustrates a recording session where a singer is singing into a microphone and monitoring the recording session with headphones. As the audio stream makes its way along the signal path, it passes through a variety of hardware and software, including the microphone, audio interface, audio driver, CPU, input and output buffers (which could be hardware or software), and hard drive. The CPU (central processing unit) is the main hardware workhorse of a computer, doing the actual computation that is required for tasks like accepting audio streams, running application programs, sending data to the hard drive, sending files to the printer, and so forth. The CPU works hand-in-hand with the operating system, which is the software program that manages which task is currently being worked on, like a conductor conducting an orchestra. Under the direction of the operating system, the CPU can give small slots of time to various processes, swapping among these processes very quickly so that it looks like each one is advancing normally. This way, multiple processes can appear to be running simultaneously.

Now let’s get back to how a recording session works. During the recording session, the microphone, an analog device, receives the audio signal and sends it to the audio interface in analog form. The audio interface could be an external device or an internal sound card. The audio interface performs analog-to-digital conversion and passes the digital signal to the computer. The audio signal is received by the computer in a digital stream that is interpreted by a driver, a piece of software that allows two hardware devices to communicate. When an audio interface is connected to a computer, an appropriate driver must be installed in the computer so that the digital audio stream generated by the audio interface can be understood and located by the computer.

The driver interprets the audio stream and sends it to an audio input buffer in RAM. Saying that the audio buffer is in RAM implies that this is a software buffer. (The audio interface has a small hardware buffer as well, but we don’t need to go to this level of detail.) The audio input buffer provides a place where the audio data can be held until the CPU is ready to process it. Buffering of audio input is necessary because the CPU may not be able to process the audio stream as soon as it comes into the computer. The CPU has to handle other processes at the same time (monitor displays, operating system tasks, etc.). It also has to make a copy of the audio data on the hard disk because the digitizing and recording process generates too much data to fit in RAM. Moving back and forth to the hard drive is time consuming. The input buffer provides a holding place for the data until the CPU is ready for it.
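The role of the input buffer can be illustrated with a small simulation. This is only a sketch under simplifying assumptions: the class `AudioInputBuffer`, the function `simulate`, and the idea of one block arriving per "tick" are hypothetical names and simplifications introduced here, not part of any real driver.

```python
from collections import deque


class AudioInputBuffer:
    """Fixed-capacity FIFO that holds blocks of samples until the CPU reads them."""

    def __init__(self, capacity_blocks):
        self.capacity_blocks = capacity_blocks
        self.blocks = deque()
        self.dropped = 0  # blocks lost because the buffer was already full

    def write(self, block):
        """Called by the (hypothetical) driver whenever a new block arrives."""
        if len(self.blocks) >= self.capacity_blocks:
            self.dropped += 1  # overrun: this would be heard as a dropout
            return False
        self.blocks.append(block)
        return True

    def read(self):
        """Called when the CPU returns to the audio task; None if nothing waits."""
        return self.blocks.popleft() if self.blocks else None


def simulate(capacity_blocks, busy_pattern):
    """busy_pattern[i] is True when the CPU is busy elsewhere during tick i."""
    buf = AudioInputBuffer(capacity_blocks)
    processed = 0
    for tick, cpu_busy in enumerate(busy_pattern):
        buf.write([tick] * 4)  # one new block of samples arrives every tick
        if not cpu_busy:
            # The CPU is free, so it drains everything that has been waiting.
            while buf.read() is not None:
                processed += 1
    return processed, buf.dropped


# The CPU is busy for three ticks, then free on the fourth.
print(simulate(4, [True, True, True, False]))  # (4, 0)
print(simulate(2, [True, True, True, False]))  # (2, 2)
```

With room for four blocks, nothing is lost while the CPU is away. With room for only two, two blocks are dropped, which is the kind of break in the audio that buffering is meant to prevent.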

Let’s follow the audio signal now to its output. In Figure 5.20, we’re assuming that you’re working on the audio in a program like Sonar or Logic. These audio processing programs serve as the user interface for the recording process. Here you can specify effects to be applied to tracks, start and stop the recording, and decide when and how to save the recording in permanent storage. The CPU performs any digital signal processing (DSP) called for by the audio processing software. For example, you might want reverb added to the singer’s voice. The CPU applies the reverb and then sends the processed data to the software output buffer. From here the audio data go back to the audio interface to be converted back to analog format and sent to the singer’s headphones or a set of connected loudspeakers. Possibly, the track of the singer’s voice could be mixed with a previously recorded instrumental track before it is sent to the audio interface and then to the headphones.


For this recording process to run smoothly – without delays or breaks in the audio – you must choose and configure your drivers and hard drives appropriately. These practical issues are discussed further in Section 5.2.3.

Figure 5.20 gives an overview of the audio signal path in one scenario, but there are many details and variations that have not been discussed here. We’re assuming a software system such as Logic or Sonar is providing the mixer and DSP, but it’s possible for additional hardware to play these roles – a hardware mixing console, equalizer, or dynamics compressor, for example. Some professional grade software applications may even have additional external DSP processors to help offload some of the work from the CPU itself, such as with Pro Tools HD. This external gear could be analog or digital, so additional DAC/ADC conversions might be necessary. We also haven’t considered details like microphone pre-amps, loudspeakers and loudspeaker amps, data buses, and multiple channels. We refer the reader to the references at the end of the chapter for more information.

5.1.5 CPU and Hard Drive Considerations

As you work with digital audio, it’s important that you have some understanding of the demands on your computer’s CPU and hard drive.

In general, the CPU is responsible for running all active processes on your computer. Processes take turns getting slices of time from the CPU so that they can all be making progress in their execution at the same time. You might have processes running on your computer at the same time you’re recording and not even be aware of it. For example, you may have automatic software updates activated. What if an automatic update tries to run while audio capture is in progress? The CPU is going to have to take some time to deal with that. If it’s dealing with a software update, it isn’t dealing with your audio stream.

Even if you make your best effort to turn off all other processes during recording, the CPU still has other important work to do that keeps it from returning immediately to the audio buffer. One of the CPU’s most important responsibilities during audio recording is writing audio data out to the hard drive. To understand how this works, it may be helpful to review briefly the different roles of RAM and hard disk memory with regard to a running program.

Ordinarily, we think of software programs operating according to the following scenario. Data is loaded into RAM, which is a space in memory temporarily allocated to a certain program. The program does computation on the data, changing it in some way. Since the RAM allocated to the program is released when the program finishes its computation, the data must be written out to the hard disk if you want a permanent copy. In this sense, RAM is considered volatile memory. For all intents and purposes, the data in RAM disappear when the program finishes execution.

But what if the program you’re running is an audio processing program like Logic or Sonar through which you’re recording audio? The recording process causes audio data to be read into RAM. Why can’t Sonar or Logic just store all the audio data in RAM until you’re done working on it and write it out to the hard disk when you’ve finished? The problem is that a very large amount of data is generated as the recording is being done – 176,400 bytes per second for CD quality audio. For a recording of any significant length, it isn’t feasible to store all the audio data in RAM. Thus, audio samples are constantly pulled from the RAM buffer by the CPU and placed on a hard drive. This takes a significant amount of the CPU’s time.
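The data rate quoted above can be reproduced with a few lines of arithmetic. The second scenario (24 tracks at 96 kHz with 3-byte samples, and a 1 GB buffer taken as 2^30 bytes) is an assumption chosen only to show how quickly a larger session fills memory; the function names are illustrative.

```python
def pcm_bytes_per_second(sample_rate_hz, bytes_per_sample, channels):
    """Data rate of uncompressed PCM audio in bytes per second."""
    return sample_rate_hz * bytes_per_sample * channels


def seconds_until_full(buffer_bytes, byte_rate):
    """How long a buffer of the given size lasts at the given data rate."""
    return buffer_bytes / byte_rate


# CD quality: 44,100 samples/s, 2 bytes per sample, 2 channels.
cd_rate = pcm_bytes_per_second(44_100, 2, 2)
print(cd_rate)  # 176400

# Assumed multitrack session: 24 channels at 96 kHz, 3 bytes per sample.
session_rate = pcm_bytes_per_second(96_000, 3, 24)
print(session_rate)  # 6912000

# Seconds of recording that would fit in 2**30 bytes of RAM at that rate.
print(seconds_until_full(2**30, session_rate))
```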

In order to keep up efficiently with the constant stream of data, the hard drive needs a dedicated space in which to write the audio data. Most audio recording programs automatically claim a large portion of hard drive space when you start recording. The size of this hard drive allocation is usually controllable in the preferences of your audio software. For example, if your software is configured to allocate 500 MB of hard drive space for each audio stream, 500 MB is immediately claimed on the hard drive when you start recording, and no other software may write to that space. If your recording uses 100 MB, the operating system returns the remaining 400 MB of space to be available to other programs. It’s important to make sure you configure the software to claim an appropriate amount of space. If your recording ends up needing more than 500 MB, the software begins dynamically finding new chunks of space on the hard drive to put the extra data. In this situation, it’s possible for dropouts to happen if sufficient space cannot be found fast enough. The problem is compounded by multitrack recording. Imagine what happens if you’re recording 24 tracks of audio at one time. The software has to find 24 blocks of space on the hard drive that are 500 MB in size. That’s 12 GB of free space that needs to be immediately and exclusively available.
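The allocation arithmetic in this example can be sketched as follows. The 500 MB figure and the 24 tracks come from the text. Treating each track as a CD quality stereo stream at 176,400 bytes per second is an assumption for illustration, and a megabyte is taken as 2^20 bytes.

```python
MB = 2**20  # bytes per megabyte, using the power-of-two definition


def preallocation_mb(tracks, mb_per_track):
    """Total disk space claimed when every track reserves its own block."""
    return tracks * mb_per_track


def seconds_covered(allocation_mb, byte_rate):
    """Recording time that fits in one allocation before more space is needed."""
    return allocation_mb * MB / byte_rate


total = preallocation_mb(24, 500)
print(total)  # 12000 MB, the "12 GB" in the text

# Assumed stream: CD quality stereo at 176,400 bytes per second.
minutes = seconds_covered(500, 176_400) / 60
print(round(minutes, 1))  # 49.5
```

Under these assumptions, a 500 MB allocation covers a little under 50 minutes of one stereo stream, so a longer take would force the software to look for additional space while recording.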

One way to avoid problems is to dedicate a separate hard drive other than your startup drive for audio capture and data storage. That way you know that no other program will attempt to use the space on that drive. You also need to have a hard drive that is large enough and fast enough to handle this amount of sustained data being written, and often read back. In today’s technology, at least a 7200 RPM, one terabyte dedicated external hard drive with a high-speed connection should be sufficient.

5.1.6 Digital Audio File Types

[aside]In our discussion of file types, we’ll use capital letters like AIFF and WAV to refer to different formats. Generally there is a corresponding suffix, called a file extension, used on file names – e.g., .aiff or .wav. However, in some cases, more than one suffix can refer to the same basic file type. For example, .aiff, .aif, and .aifc are all variants of the AIFF format.[/aside]

You saw in the previous section that the digital audio stream moves through various pieces of software and hardware during a recording session, but eventually you’re going to want to save the stream as a file in permanent storage. At this point you have to decide the format in which to save the file. Your choice depends on how and where you’re going to use the recording.

Audio file formats differ along a number of axes. They can be free or proprietary, platform-restricted or cross-platform, compressed or uncompressed, container files or simple audio files, and copy-protected or unprotected. (Copy protection is more commonly referred to as digital rights management or DRM.)

Proprietary file formats are controlled by a company or an organization. The particulars of a proprietary format and how the format is produced are not made public and their use is subject to patents. Some proprietary file formats are associated with commercial software for audio processing. Such files can be opened and used only in the software with which they’re associated. Some examples are CWP files for Cakewalk Sonar, SES for Adobe Audition multitrack sessions, AUP projects for Audacity, and PTF for Pro Tools. These are project file formats that include meta-information about the overall organization of an audio project. Other proprietary formats – e.g., MP3 – may have patent restrictions on their use, but they have openly documented standards and can be licensed for use on a variety of platforms. As an alternative, there exist some free, open source audio file formats, including OGG and FLAC.

Platform-restricted files can be used only under certain operating systems. For example, WMA files run under Windows, AIFF files run under Apple OS, and AU files run under Unix and Linux. The MP3 format is cross-platform. AAC is a cross-platform format that has become widely popular from its use on phones, pad computers, digital radio, and video game consoles.

[aside]Pulse code modulation was introduced by British scientist A. Reeves in the 1930s. Reeves patented PCM as a way of transmitting messages in “amplitude-dichotomized, time-quantized” form – what we now call “digital.”[/aside]

You can’t tell from the file extension whether or not a file is compressed, and if it is compressed, you can’t necessarily tell what compression algorithm (called a codec) was used. There are both compressed and uncompressed versions of WAV, AIFF, and AU files. When you save an audio file, you can choose which type you want. The basic format for uncompressed audio data is called PCM (pulse code modulation). The term pulse code modulation is derived from the way in which raw audio data is generated and communicated. That is, it is generated by the process of sampling and quantization described in Section 5.1 and communicated as binary data by electronic pulses representing 0s and 1s. WAV, AIFF, AU, RAW, and PCM files can store uncompressed audio data. RAW files contain only the audio data, without even a header on the file.
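To make the difference between a RAW file and an uncompressed WAV file concrete, the sketch below generates a few PCM samples and wraps them in a WAV file using Python's standard `wave` module. The helper names `make_pcm` and `wrap_in_wav` and the 440 Hz test tone are assumptions made for this example. The 44-byte header is what this module writes for plain PCM; WAV files from other software may carry extra chunks and a longer header.

```python
import io
import math
import struct
import wave


def make_pcm(n_samples=441, sample_rate=44_100, freq=440.0):
    """Return n_samples of a sine tone as raw 16-bit little-endian PCM bytes."""
    samples = [
        int(32767 * 0.5 * math.sin(2 * math.pi * freq * i / sample_rate))
        for i in range(n_samples)
    ]
    return struct.pack("<%dh" % n_samples, *samples)


def wrap_in_wav(pcm_bytes, sample_rate=44_100, channels=1, sample_width=2):
    """Wrap raw PCM bytes in a WAV file held in memory and return its bytes."""
    out = io.BytesIO()
    with wave.open(out, "wb") as w:
        w.setnchannels(channels)
        w.setsampwidth(sample_width)  # bytes per sample
        w.setframerate(sample_rate)
        w.writeframes(pcm_bytes)
    return out.getvalue()


raw = make_pcm()          # what a RAW file would contain: samples only
wav = wrap_in_wav(raw)    # the same samples with a header in front

print(len(raw))            # 882 bytes: 441 samples of 2 bytes each
print(len(wav) - len(raw)) # 44 bytes of header written by the wave module
print(wav[44:] == raw)     # True: the audio data itself is unchanged
```

A program reading the RAW version has to be told the sampling rate, bit depth, and number of channels, because nothing in the file records them.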

One basic reason that WAV and AIFF files come in compressed and uncompressed versions is that, in reality, these are container file formats rather than simple audio files. A container file wraps a simple audio file in a meta-format which specifies blocks of information that should be included in the header along with the size and position of chunks of data following the header. The container file may allow options for the format of the actual audio data, including whether or not it is compressed. If the audio is compressed, the system that tries to open and play the container file must have the appropriate codec in order to decompress and play it. AIFF files are container files based on a standardized format called IFF. WAV files are based on the RIFF format. MP3 is a container format that is part of the more general MPEG standard for audio and video. WMA is a Windows container format. OGG is an open source, cross-platform alternative.
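The chunk structure of a container file can be seen by walking through a small WAV file. The sketch below is a minimal reader for the RIFF layout, in which each chunk is a four-character ID followed by a four-byte little-endian size. It is not a complete parser, and the helper names `tiny_wav`, `list_riff_chunks`, and `format_tag` are assumptions made for this example. The test file is produced with Python's standard `wave` module.

```python
import io
import struct
import wave


def tiny_wav():
    """Build a four-sample mono 16-bit WAV file in memory for demonstration."""
    out = io.BytesIO()
    with wave.open(out, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)
        w.setframerate(44_100)
        w.writeframes(struct.pack("<4h", 0, 1000, -1000, 0))
    return out.getvalue()


def list_riff_chunks(data):
    """Return (form_type, [(chunk_id, size, payload_offset), ...])."""
    riff_id, _riff_size, form_type = struct.unpack("<4sI4s", data[:12])
    if riff_id != b"RIFF":
        raise ValueError("not a RIFF file")
    chunks = []
    pos = 12
    while pos + 8 <= len(data):
        chunk_id, size = struct.unpack("<4sI", data[pos:pos + 8])
        chunks.append((chunk_id.decode("ascii"), size, pos + 8))
        pos += 8 + size + (size & 1)  # chunks are padded to an even length
    return form_type.decode("ascii"), chunks


def format_tag(data):
    """Read the format code from the 'fmt ' chunk; 1 means uncompressed PCM."""
    _form, chunks = list_riff_chunks(data)
    for chunk_id, _size, offset in chunks:
        if chunk_id == "fmt ":
            return struct.unpack("<H", data[offset:offset + 2])[0]
    return None


wav_bytes = tiny_wav()
form, chunks = list_riff_chunks(wav_bytes)
print(form)                              # WAVE
print([(c[0], c[1]) for c in chunks])    # [('fmt ', 16), ('data', 8)]
print(format_tag(wav_bytes))             # 1
```

The format code in the `fmt ` chunk is where a WAV file declares whether its data chunk holds plain PCM or audio encoded with some codec, which is why the file extension alone does not tell you.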

In addition to audio data, container files can include information like the names of songs, artists, song genres, album names, copyrights, and other annotations. The metadata may itself be in a standardized format. For example, MP3 files use the ID3 format for metadata.
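As an illustration of standardized metadata, the sketch below reads the oldest and simplest form of ID3, the ID3v1 tag, which occupies the last 128 bytes of a file and begins with the characters "TAG". Most current MP3 files use the more elaborate ID3v2 tag at the start of the file, which is not handled here. The helper names and the sample song data are made up for the example, and the "audio" bytes are placeholders rather than real MP3 data.

```python
import struct

# ID3v1 layout: "TAG", title, artist, album, year, comment, genre = 128 bytes.
ID3V1 = struct.Struct("<3s30s30s30s4s30sB")


def build_id3v1(title, artist, album, year, comment="", genre=255):
    """Pack text fields into a 128-byte ID3v1 tag (for demonstration only)."""
    def field(text, length):
        return text.encode("latin-1")[:length].ljust(length, b"\x00")
    return ID3V1.pack(b"TAG", field(title, 30), field(artist, 30),
                      field(album, 30), field(year, 4),
                      field(comment, 30), genre)


def read_id3v1(file_bytes):
    """Return a dict of ID3v1 fields, or None if the file has no such tag."""
    if len(file_bytes) < ID3V1.size or file_bytes[-128:-125] != b"TAG":
        return None
    _, title, artist, album, year, comment, genre = ID3V1.unpack(file_bytes[-128:])

    def clean(raw):
        return raw.split(b"\x00")[0].decode("latin-1").strip()

    return {"title": clean(title), "artist": clean(artist),
            "album": clean(album), "year": clean(year),
            "comment": clean(comment), "genre": genre}


# Placeholder bytes standing in for audio data, followed by a tag.
fake_file = b"\x00" * 200 + build_id3v1("Example Song", "Example Artist",
                                        "Example Album", "2009")
print(read_id3v1(fake_file)["title"])  # Example Song
print(read_id3v1(b"\x00" * 200))       # None
```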

Compression is inherent in some container file types and optional in others. MP3, AAC, and WMA files are always compressed. Compression is important if one of your main considerations is the ability to store lots of files. Consider the size of a CD quality audio file, which consists of two channels of 44,100 samples per second with two bytes per sample. This gives

$$!2\ast \frac{44100\: samples}{sec}\ast \frac{2\: bytes}{sample}\ast 60\frac{sec}{min}\ast 5\: min=52920000\: bytes\approx 50.5\: MB$$

[aside]Why are 52,920,000 bytes equal to about 50.5 MB? You might expect a megabyte to be 1,000,000 bytes, but in the realm of computers, things are generally done in powers of 2. Thus, we use the following definitions:

kilo = $$2^{10}$$ = 1024

mega = $$2^{20}$$ = 1,048,576

You should become familiar with the following abbreviations:

[table th=”0″ width=”100%”]

kilobits,kb,$$2^{10}$$ bits

kilobytes,kB,$$2^{10}$$ bytes

megabits,Mb,$$2^{20}$$ bits

megabytes,MB,$$2^{20}$$ bytes[/table]

Based on these definitions, 52,920,000 bytes is converted to megabytes by dividing by 1,048,576 bytes.

Unfortunately, usage is not entirely consistent. You’ll sometimes see “kilo” assumed to be 1000 and “mega” assumed to be 1,000,000, e.g., in the specification of the storage capacity of a CD.[/aside]

A five minute piece of music, uncompressed, takes up over 50 MB of memory. MP3 and AAC compression can reduce this to less than a tenth of the original size. Thus, MP3 files are popular for portable music players, since compression makes it possible to store many more songs.
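The file size calculation can be checked in a few lines, which also shows the difference between the power-of-two and power-of-ten definitions of a megabyte. The 128 kb/s bit rate used for the compressed estimate is an assumption chosen as a typical MP3 setting; it is not a figure from the text, and the function name is illustrative.

```python
def pcm_file_bytes(sample_rate_hz, bytes_per_sample, channels, seconds):
    """Size in bytes of the audio data in an uncompressed PCM recording."""
    return sample_rate_hz * bytes_per_sample * channels * seconds


size = pcm_file_bytes(44_100, 2, 2, 5 * 60)
print(size)                     # 52920000
print(round(size / 2**20, 1))   # 50.5  (mega = 2**20)
print(round(size / 10**6, 2))   # 52.92 (mega = 1,000,000)

# Assumed compressed bit rate of 128,000 bits per second for five minutes.
compressed = 128_000 // 8 * 5 * 60
print(compressed)               # 4800000
print(size / compressed)        # 11.025
```

At the assumed bit rate the compressed file is roughly one eleventh of the uncompressed size, which is in line with the "less than a tenth" figure above.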

Compression algorithms are of two basic types: lossless or lossy. In the case of a lossless compression algorithm, no audio information is lost from compression to decompression. The audio information is compressed, making the file smaller for storage and transmission. When it is played or processed, it is decompressed, and the exact data that was originally recorded is restored. In the case of a lossy compression algorithm, it’s impossible to get back exactly the original data upon decompression. Examples of lossy compression formats are MP3, AAC, Ogg Vorbis, and the μ-law and A-law compression used in AU files. Examples of lossless compression algorithms include FLAC (Free Lossless Audio Codec), Apple Lossless, MPEG-4 ALS (Audio Lossless Coding), Monkey’s Audio, and TTA (True Audio). More details of audio codecs are given in Section 5.2.1.
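The distinction between lossless and lossy compression can be demonstrated on a handful of 16-bit samples. In this sketch, the general-purpose `zlib` compressor stands in for a lossless audio codec, and a simplified μ-law companding to 8 bits stands in for a lossy one. The μ-law functions use the continuous companding formula with μ = 255 and uniform quantization, which is an approximation and not the exact bit-level encoding used in telephony or AU files. The sample values are arbitrary.

```python
import math
import struct
import zlib

MU = 255  # companding constant for mu-law


def mu_law_encode(sample):
    """Compress one 16-bit sample to an 8-bit code (0..255)."""
    x = max(-1.0, min(1.0, sample / 32768.0))
    y = math.copysign(math.log1p(MU * abs(x)) / math.log1p(MU), x)
    return int(round((y + 1) / 2 * 255))


def mu_law_decode(code):
    """Expand an 8-bit code back to an approximate 16-bit sample."""
    y = code / 255 * 2 - 1
    x = math.copysign((math.pow(1 + MU, abs(y)) - 1) / MU, y)
    return max(-32768, min(32767, int(round(x * 32768))))


samples = [0, 1000, -1000, 12345, -20000, 32767]
pcm = struct.pack("<%dh" % len(samples), *samples)

# Lossless: decompression restores exactly the bytes that went in.
restored = zlib.decompress(zlib.compress(pcm))
print(restored == pcm)  # True

# Lossy: each sample is stored in one byte instead of two,
# and decoding returns values that are close but not identical.
encoded = bytes(mu_law_encode(s) for s in samples)
decoded = [mu_law_decode(c) for c in encoded]
print(len(pcm), len(encoded))  # 12 6
print(decoded == samples)      # False
```

The lossy version halves the storage, but the original sample values cannot be recovered from it; only an approximation of them can.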

With the introduction of portable music players, copy-protected audio files became more prevalent. Apple introduced iTunes in 2001 and opened its online music store in 2003, allowing users to purchase and download music. The audio files, encoded in a proprietary version of the AAC format and using the .m4p file extension, were protected with Apple’s FairPlay DRM system. DRM enforces limits on where the file can be played and whether it can be shared or copied. In 2009, Apple lifted restrictions on music sold from its iTunes store, offering an unprotected .m4a file as an alternative to .m4p. Copy-protection is generally embedded within container file formats like MP3. WMA (Windows Media Audio) files are another example, based on the Advanced Systems Format (ASF) and providing DRM support.

Common audio file types are summarized in Table 5.1.

[table caption=”Table 5.1 Common audio file types” width=”90%”]

File Type,Platform,File Extensions,Compression,Container,Proprietary,DRM

PCM,cross,.pcm,no,no,no,no

RAW,cross,.raw,no,no,no,no

WAV,cross,.wav,Optional (lossy),”yes, RIFF format”,no,no

AIFF,Mac,”.aif, .aiff”,no,”yes, IFF format”,no,no

AIFF-C,Mac,.aifc,”yes, with various codecs (lossy)”,”yes, IFF format”,no,

CAF,Mac,.caf,yes,yes,no,no

AU,Unix/Linux,”.au, .snd”,optional μ-law (lossy),yes,no,no

MP3,cross,.mp3,MPEG (lossy),yes,license required for distribution or sale of codec but not for use,optional

AAC,cross,”.m4a, .m4b, .m4p, .m4v, .m4r, .3gp, .mp4, .aac”,AAC (lossy),more of a compression standard than a container; ADIF is container,license required for distribution or sale of codec but not for use,

WMA,Windows,.wma,WMA (lossy),yes,yes,optional

OGG Vorbis,cross,”.ogg, .oga”,Vorbis (lossy),yes,”no, open source”,optional

FLAC,cross,.flac,FLAC (lossless),yes,”no, open source”,optional

[/table]

AIFF and WAV have been the most commonly used file types in recent years. CAF files are an extension of AIFF files without AIFF’s 4 GB size limit. This additional file size was needed for all the looping and other metadata used in GarageBand and Logic.


All along the way as you work with digital audio, you’ll have to make choices about the format in which you save your files. A general strategy is this:

- When you’re processing the audio in a software environment such as Audition, Logic, or Pro Tools, save your work in the software’s proprietary format until you’re finished working on it. These formats retain meta-information about non-destructive processes – filters, EQ, etc. – applied to the audio as it plays. Non-destructive processes do not change the original audio samples. Thus, they are easily undone, and you can always go back and edit the audio data in other ways for other purposes if needed.

- The originally recorded audio is the best information you have, so it’s always good to keep an uncompressed copy of this. Generally, you should keep as much data as possible as you edit an audio file, retaining the highest bit depth and sampling rate appropriate for the work.

- At the end of processing, save the file in the format suitable for your platform of distribution (e.g., CD, DVD, web, or live performance). This may be compressed or uncompressed, depending on your purposes.